Native Performance

The highlight of last week was the switch from a pure Go driver for PostgreSQL to a wrapper around the native library, jgallagher/go-libpq. A pure Go driver seemed like a nice thing in the beginning (no need to bother with native dependencies or header files), but it turned out to be too immature. Confusing error reporting for connection issues was one of the biggest problems for us.

Thanks to the database/sql abstraction in Go, I was able to switch to the native driver in one day. Cleanups mostly involved our handling of the uuid type. The wrapper around the native PostgreSQL client library offers similar performance and wonderful error reporting.

There were a few issues with our code while trying to run the Finland dataset; they were mostly solved by replacing client-side transactional upsert statements with PostgreSQL upsert statements using Common Table Expressions. One rare race condition in the chat module was fixed with a tiny smoke tester and a fine-grained row lock.

We were able to run the entire Finland dataset (except visits) in 2m53s, which is comparable to the pure Go driver. Sweden takes more time, but it actually runs without any big issues.

As long as our system can handle a burst of events coming from the entire dataset, running the usual production load should never be a problem.

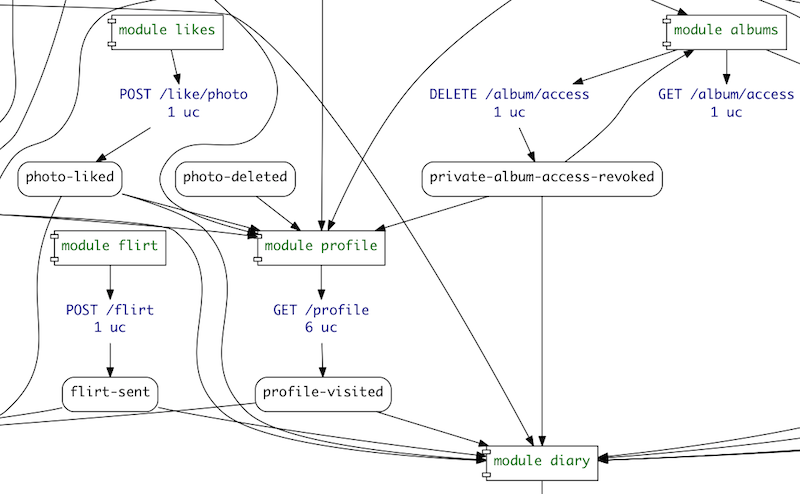

Aside from performance, I spent time implementing the albums module (permissions on private photo albums) and adding a proper implementation of the profile (a public view of approved user information with any permissions applied). Then I went across the codebase, cleaning up modules and adding missing use cases. The functionality of these modules is completely covered now: news, alerts, chat, block, likes, poll, flirt, profile, diary, albums, favorite.

The draft, auth and review modules still lack use cases, so that is one thing we will try to fix this week.

These use cases become even more important to us, since we check multiple things while verifying them:

- verify that all expectations are met;

- ensure that there are no connection leaks in code being tested;

- check that all events present in given are listed in the module declaration (it is used to route only the needed events to module queues in RabbitMQ).
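The last check in the list above could be sketched as follows. The Module and UseCase types, their fields, and both functions are hypothetical names chosen for this example; our real use case structures carry more than this.

```go
package main

import "fmt"

// Module is a hypothetical module declaration: a name plus the event
// types that should be routed to the module's queue.
type Module struct {
	Name   string
	Events []string
}

// UseCase is a hypothetical, stripped-down use case. Only the "given"
// part matters for this check.
type UseCase struct {
	Name  string
	Given []string // event types that set up the scenario
}

// undeclaredEvents returns the event types used in Given that the module
// declaration does not list, in order of first appearance.
func undeclaredEvents(m Module, uc UseCase) []string {
	declared := make(map[string]bool, len(m.Events))
	for _, e := range m.Events {
		declared[e] = true
	}
	var missing []string
	seen := make(map[string]bool)
	for _, e := range uc.Given {
		if !declared[e] && !seen[e] {
			seen[e] = true
			missing = append(missing, e)
		}
	}
	return missing
}

// verifyDeclaration reports an error when a use case relies on an event
// that would never be routed to the module.
func verifyDeclaration(m Module, uc UseCase) error {
	if missing := undeclaredEvents(m, uc); len(missing) > 0 {
		return fmt.Errorf("%s: use case %q uses undeclared events %v",
			m.Name, uc.Name, missing)
	}
	return nil
}
```

A use case that passes in tests but depends on an undeclared event would silently break in production, because the event would never reach the module's queue. Failing verification early is one way to surface that mismatch.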

I also removed all legacy HTML stuff from our back-end code. This separation improved it a lot. Hopefully, today we'll decide on our front-end strategy and start implementing this last missing bit. Use cases help to close the feedback loop, but there is nothing like a tangible product.

Pieter and Tomas were busy this week with performance optimizations of the code and with these time-consuming runs on the production data. I think they have quite a lot to share about the inner workings of RabbitMQ and PostgreSQL in the Glesys datacenter (VMware virtualization).

Published: August 25, 2014.
