Event-driven week


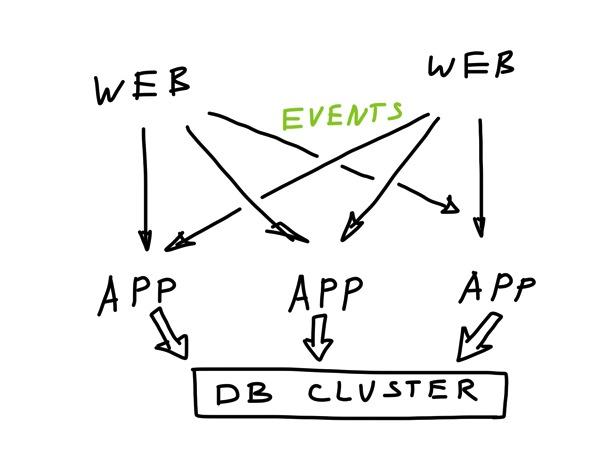

Last week started as planned. First, I implemented persistence for a simple chat service, then moved on to a multi-master design for the application nodes. In this design, each application node can handle any request in the same way. This approach:

- simplifies the design;

- does not prevent us from partitioning work between nodes later (e.g. using consistent hashing to keep user-X on nodes 4, 5 and 6);

- forces us to think about communication between the nodes.

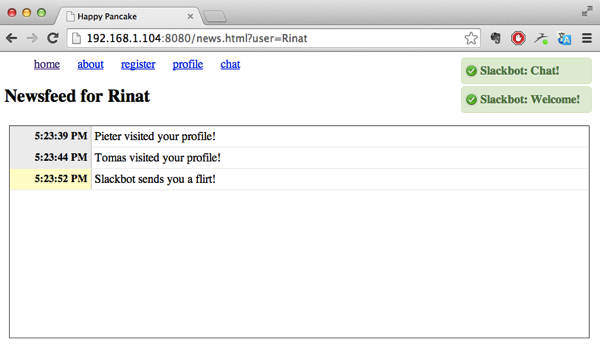

The most interesting part was about the UX flows. For example, on a newsfeed page we want to:

- Figure out the current version of the newsfeed for the user (say v256)

- Load X newsfeed records from the past, up to v256.

- Subscribe to the real-time updates feed for all new items starting from v256
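The three steps above can be sketched roughly as follows. This is a toy in-memory model, not the actual prototype: the `Feed` and `Item` types and their methods are hypothetical names, and the sketch assumes that history includes the item at the current version while the subscription delivers only strictly newer items.

```go
package main

import "fmt"

// Item is a hypothetical newsfeed record tagged with the feed version
// that produced it.
type Item struct {
	Version int
	Text    string
}

// Feed is a toy in-memory newsfeed for a single user.
type Feed struct {
	items []Item // ordered by Version, ascending
}

// Append adds a record and assigns it the next version number.
func (f *Feed) Append(text string) Item {
	it := Item{Version: f.CurrentVersion() + 1, Text: text}
	f.items = append(f.items, it)
	return it
}

// CurrentVersion returns the version of the latest item (0 for an empty feed).
func (f *Feed) CurrentVersion() int {
	if len(f.items) == 0 {
		return 0
	}
	return f.items[len(f.items)-1].Version
}

// History returns at most limit of the most recent items with Version <= upTo.
func (f *Feed) History(upTo, limit int) []Item {
	var out []Item
	for _, it := range f.items {
		if it.Version <= upTo {
			out = append(out, it)
		}
	}
	if len(out) > limit {
		out = out[len(out)-limit:]
	}
	return out
}

// Since returns items with Version > after. It stands in for a real-time
// subscription that starts right after the version we loaded.
func (f *Feed) Since(after int) []Item {
	var out []Item
	for _, it := range f.items {
		if it.Version > after {
			out = append(out, it)
		}
	}
	return out
}

func main() {
	feed := &Feed{}
	for _, t := range []string{"visit", "flirt", "message", "visit", "flirt"} {
		feed.Append(t)
	}

	v := feed.CurrentVersion()    // 1. figure out the current version
	history := feed.History(v, 3) // 2. load X records up to that version
	feed.Append("message")        // something new arrives meanwhile
	live := feed.Since(v)         // 3. receive everything after that version

	fmt.Println("version:", v)
	fmt.Println("history:", history)
	fmt.Println("live:", live)
}
```

Pinning both the history query and the subscription to the same version is what keeps the two streams from overlapping or leaving a gap.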

There is an additional caveat. When loading the history of activities, we merely display it on the screen (with the ability to go back). However, activities that arrive in real time need more complicated dispatch:

- Incoming messages need to pop up as notifications and update the unread message count in the UI.

- Incoming flirts and profile visits have to go directly into the newsfeed.
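A minimal sketch of that difference between history and real-time dispatch, assuming a hypothetical `UI` struct that stands in for client state. The `Kind` values and the notification text are made up for illustration.

```go
package main

import "fmt"

// Kind is a hypothetical activity type.
type Kind string

const (
	Message      Kind = "message"
	Flirt        Kind = "flirt"
	ProfileVisit Kind = "profile-visit"
)

// Activity is a single newsfeed or chat activity.
type Activity struct {
	Kind Kind
	From string
}

// UI is a toy model of what the client shows.
type UI struct {
	Notifications []string
	Unread        int
	Newsfeed      []Activity
}

// ShowHistory merely displays past activities, whatever their kind.
func (ui *UI) ShowHistory(items []Activity) {
	ui.Newsfeed = append(ui.Newsfeed, items...)
}

// Dispatch routes an activity that arrived in real time.
// Kinds that are not listed here are silently ignored in this sketch.
func (ui *UI) Dispatch(a Activity) {
	switch a.Kind {
	case Message:
		// Messages pop up as notifications and bump the unread counter.
		ui.Notifications = append(ui.Notifications, "New message from "+a.From)
		ui.Unread++
	case Flirt, ProfileVisit:
		// Flirts and profile visits go straight into the newsfeed.
		ui.Newsfeed = append(ui.Newsfeed, a)
	}
}

func main() {
	ui := &UI{}
	ui.ShowHistory([]Activity{{Kind: Message, From: "alice"}})
	ui.Dispatch(Activity{Kind: Message, From: "bob"})
	ui.Dispatch(Activity{Kind: Flirt, From: "carol"})
	fmt.Printf("%+v\n", *ui)
}
```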

Modeling these behaviors led to some deeper insights into the domain. At the beginning of the week I wasn’t even able to articulate them properly :]

Caveats of Event Sourcing

Tracking version numbers in a reliable way was also a big challenge initially. The problem originated in the fact that our events are generated on multiple nodes. We don’t have a single source of truth in our application, since achieving that would require either consensus in a cluster or a single master to establish a strict order of events (like Greg’s EventStore does, for example). Both approaches are quite expensive at high throughput, since you can’t beat the laws of physics (unless you cheat with atomic clocks, like Google Spanner).

Initially, I implemented a simple equivalent of vector clocks for tracking version numbers of a state (to reliably compare state versions in cases where different nodes receive events in a different order). However, after a discussion with Tomas we agreed to switch to simple timestamps, which sacrifice precision for simplicity. We are OK with losing 1 message out of 10000 in the newsfeed, as long as it always shows up in the chat window in the end.
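For illustration, here is a generic textbook-style vector clock comparison. It is not the code from the prototype; `VClock`, `Order` and `Compare` are hypothetical names, and a node missing from a clock is assumed to have a counter of zero.

```go
package main

import "fmt"

// VClock maps a node name to the number of events seen from that node.
type VClock map[string]int

// Order describes how two vector clocks relate to each other.
type Order int

const (
	Equal Order = iota
	Before
	After
	Concurrent
)

func (o Order) String() string {
	return [...]string{"equal", "before", "after", "concurrent"}[o]
}

// Compare reports whether a happened before b, after b, is equal to b,
// or whether the two states are concurrent (neither has seen everything
// the other has).
func Compare(a, b VClock) Order {
	aLess, bLess := false, false
	for node, av := range a {
		bv := b[node] // a missing node counts as zero
		if av < bv {
			aLess = true
		}
		if av > bv {
			bLess = true
		}
	}
	for node, bv := range b {
		if _, ok := a[node]; !ok && bv > 0 {
			aLess = true
		}
	}
	switch {
	case aLess && bLess:
		return Concurrent
	case aLess:
		return Before
	case bLess:
		return After
	default:
		return Equal
	}
}

func main() {
	a := VClock{"node1": 2, "node2": 1}
	b := VClock{"node1": 1, "node2": 2}
	fmt.Println(Compare(a, b))
	fmt.Println(Compare(VClock{"node1": 1}, VClock{"node1": 1, "node2": 1}))
}
```

A plain timestamp cannot express the concurrent case: two states always compare as earlier or later, which is the precision given up in exchange for simplicity.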

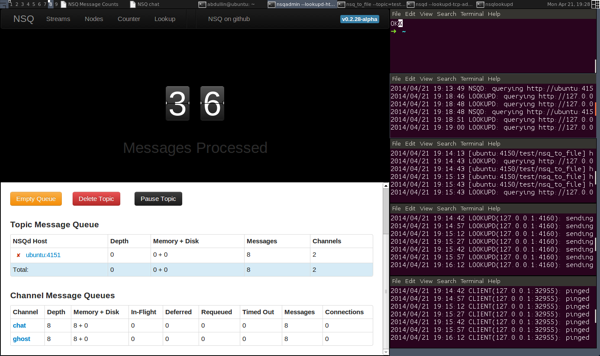

NSQ

For the communication tech I picked the NSQ messaging platform, since it already has a lot of tooling that boosts productivity. NSQ is used only as glorified BUS sockets with buffering and a nice UI. Hence, if Tomas later manages to push us towards nanomsg, we could switch quite easily.

A nice benefit of using something like nanomsg with ETCD or NSQ is that this system does not have a single point of failure. All communications are peer-to-peer. This increases reliability of the overall system and eliminates some bottlenecks.

Micro-services

Our understanding of micro-services keeps evolving in a predictable direction. We outgrew approaches like “event-sourcing in every component” and “CRUD CQRS everywhere” and moved to a more fine-grained and balanced point of view. A component can do whatever it wants with the storage, as long as it publishes events out and keeps its privates hidden.

Even in a real-time domain (where everything screams “reactive” and “event-driven”), there are benefits to implementing certain components in a simple CRUD fashion. This is especially true in cases where you can use a scalable multi-master database as your storage backend.

Pieter was working on exactly the CRUD/CQRS part of our design, modeling basic interactions (registration, login, profile editing and newsfeed) on top of FoundationDB. This also involved getting used to the existing HPC data, different approaches in FoundationDB and Go web frameworks.

Tomas was mostly busy with the admin work, supporting our R&D and gaining more insights into the existing version of HPC (with the purpose of simplifying or removing features that are not helpful or aren't used at all).

Plans

This week is going to be a bit shorter for me: we have May 1st and 2nd as holidays in Russia. Still, I will try to finish modeling event-driven interactions for the newsfeed and chat. This would involve the UX side (I still haven’t fit transient events, like user typing notifications, into the last prototype) plus implementing a decent event persistence strategy. The latter would probably involve further tweaking of our event storage layer for FoundationDB, since I didn’t address the scenario where the same event can be appended to the event storage from multiple machines. We want to save events in batches, while avoiding any conflicts caused by appending the same event in different transactions.
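One possible way to approach the duplicate-append scenario is to make appends idempotent by event ID. The sketch below only illustrates that idea with an in-memory store. It does not use the FoundationDB API, and the `Event`, `Store` and `AppendBatch` names are hypothetical. In a real transactional store, the existence check and the write would have to happen inside the same transaction.

```go
package main

import (
	"fmt"
	"sync"
)

// Event is a hypothetical event with a globally unique ID.
type Event struct {
	ID   string
	Data string
}

// Store is a toy in-memory event storage. The mutex stands in for the
// isolation a database transaction would provide.
type Store struct {
	mu   sync.Mutex
	seen map[string]bool
	log  []Event
}

// NewStore creates an empty store.
func NewStore() *Store {
	return &Store{seen: make(map[string]bool)}
}

// AppendBatch saves every event in the batch that has not been stored yet
// and returns the number of events that were actually added.
func (s *Store) AppendBatch(batch []Event) int {
	s.mu.Lock()
	defer s.mu.Unlock()
	added := 0
	for _, e := range batch {
		if s.seen[e.ID] {
			continue // another machine already appended this event
		}
		s.seen[e.ID] = true
		s.log = append(s.log, e)
		added++
	}
	return added
}

// Len returns the number of stored events.
func (s *Store) Len() int {
	s.mu.Lock()
	defer s.mu.Unlock()
	return len(s.log)
}

func main() {
	store := NewStore()
	// Two machines append overlapping batches.
	fromA := []Event{{ID: "e1", Data: "flirt"}, {ID: "e2", Data: "message"}}
	fromB := []Event{{ID: "e2", Data: "message"}, {ID: "e3", Data: "visit"}}
	fmt.Println("added from A:", store.AppendBatch(fromA))
	fmt.Println("added from B:", store.AppendBatch(fromB))
	fmt.Println("total events:", store.Len())
}
```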

Published: April 28, 2014.

