M1, Viva and alien tech


The M1 system-on-a-chip was one of the most exciting tech announcements of 2020 for me. Entry-level Apple computers got a significant improvement in performance and battery life at a fraction of the cost. The jump was even more spectacular than what AMD has been able to deliver in recent years.

Part of the magic was that Apple managed to switch its architecture from Intel x86 CPUs to a custom ARM-based processor. To achieve that, they had to change many things, from the hardware all the way up to the OS and software implementation details.

The M1 rollout wasn't without glitches, yet it demonstrated that it is possible to deliver differentiating features to customers by:

- switching from the x86 instruction set (complex and with a bit of legacy) to a customized ARM instruction set;

- designing hardware in tandem with the software (Rosetta 2 and x86 emulation, reference counting);

- closely integrating systems and iterating on the integrated solution as a whole.

Even if Apple fails to follow up with M1X and M2 variants in the next year (unlikely, given their track record with the A-series processors), the M1 has already got more companies interested in ARM/RISC-V processors and custom CPUs.

This heralds potential changes for the world of the PC and modular desktop computers. It is cool to have a model where one is free to pick their components and assemble a working system like a Lego. I've done this on multiple occasions.

However, such freedom comes with a hidden organizational cost: vendors have to agree on the interfaces and protocols that link their components together. The more components there are, the harder it is to reach an agreement.

We are talking about things like CPU socket families, PCI-E specifications, power interfaces, physical dimensions, RAM interfaces, buses, frequencies, cooling, etc. With so many moving parts to negotiate and support, the rate of change is inherently going to be slower. And we aren't even talking about the drivers and compatibility.

Meanwhile, Apple can alter everything inside their gadget and nobody will care.

If the trend holds, there will be more changes in the hardware that runs software: AMD vs Intel, ARM vs x86, even RISC-V vs ARM, FPGA vs ASIC. If patent applications are any indication, we might see reprogrammable execution units within CPUs. If the recent employment changes of Jim Keller are any indication, we are going to see more specialized processing units in the upcoming years.

In this new light, I'm interested in gaining a deeper understanding of how software and hardware work together.

So, let's learn some hardware and FPGAs, then?

However, normal learning paths are boring. One has to learn digital logic, go through Verilog/VHDL, and start building system elements from the bottom: ALUs, buses, memory, logical units. Afterward, build a compiler capable of making your programs run on your hardware.

Such a bottom-up trajectory is designed for somebody who is starting a long career in hardware design. It is understandable, but boring for me. Two small kids and all that.

Can we approach the problem in a completely different way?

Viva/Azido

The announcement of the M1 in 2020 coincided with another tech-related announcement: Ocado Group had acquired Haddington Dynamics. Ocado focuses on software+robotics platforms for retail, while HD sells a low-cost robot arm called Dexter with unique characteristics (mostly its precision and sensitivity).

I've talked about the Dexter hand before. Let me focus on one aspect for now:

> The FPGA supercomputer onboard the robot gets 0.8-1.6 million points of precision (CPR) directly on each of the robot’s joints, allowing extremely imprecise parts to produce extremely precise movement.

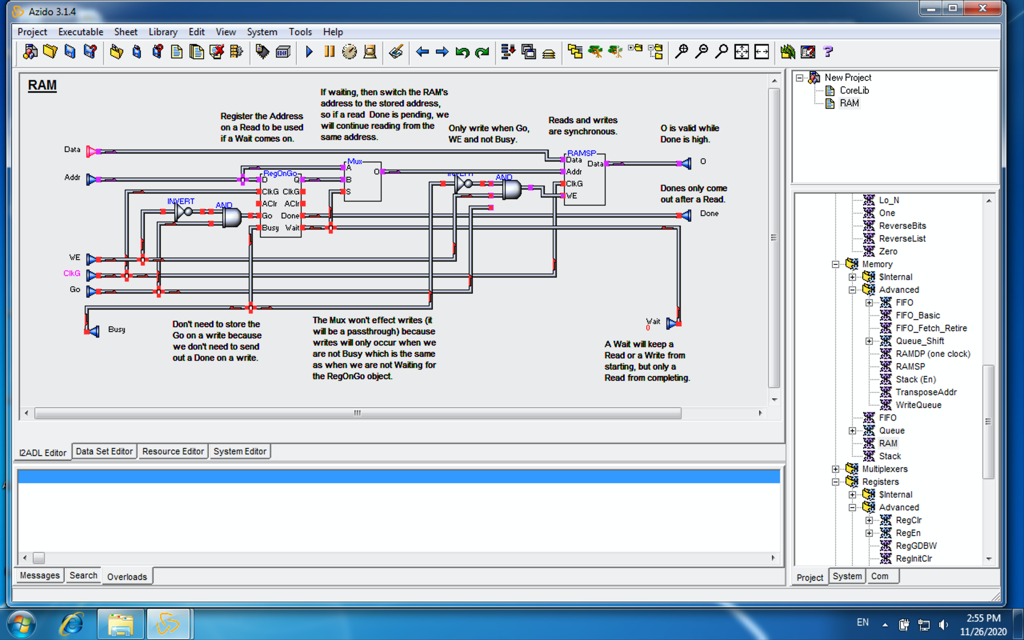
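To get a feel for what those numbers mean, here is a back-of-the-envelope sketch. It reads CPR as encoder counts per revolution and converts the quoted range into an angular step per count, plus the arc length that one count sweeps at the end of an arm. The 0.5 m arm length is my own illustrative assumption, not a Dexter specification, and the function names are made up for this example.

```python
import math


def angular_resolution_deg(cpr: int) -> float:
    """Smallest joint angle (in degrees) that one encoder count represents."""
    return 360.0 / cpr


def tip_resolution_um(cpr: int, arm_length_m: float) -> float:
    """Arc length (in micrometres) swept by one count at arm_length_m from the joint."""
    return 2 * math.pi * arm_length_m / cpr * 1e6


# CPR range comes from the quote above.
# ARM_LENGTH_M is an assumed, illustrative value (NOT a Dexter spec).
ARM_LENGTH_M = 0.5

for cpr in (800_000, 1_600_000):
    arcsec = angular_resolution_deg(cpr) * 3600
    tip = tip_resolution_um(cpr, ARM_LENGTH_M)
    print(f"{cpr:>9} CPR: {arcsec:.2f} arcsec per count, {tip:.2f} um at {ARM_LENGTH_M} m")
```

Under these assumptions, a single count is on the order of one arcsecond, which translates to just a few micrometres at the tip of a half-metre arm. This is only the resolution of the measurement, of course; it says nothing about how accurately the arm can actually be driven to a position.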

The interesting part is that this custom FPGA design wasn't built with mainstream tooling. It uses an ancient piece of software called Viva/Azido.

This software is so special, different, and old. It could be an alien legacy for all we know.

Viva was initially developed by Star Bridge around 2000 to build custom hardware accelerators on top of FPGAs. As Forbes wrote in 2003:

> Star Bridge sells four FPGA-based "hypercomputer" models with prices ranging from $175,000 to $700,000. The "sweet spot" machine, called the HC-62, sells for $350,000 and contains 11 Xilinx FPGA chips, which cost about $3,000 each. That model will perform 200 billion floating point operations a second. The $700,000 model contains 22 Xilinx chips and can perform 400 billion floating point operations a second, Gilson claims. In addition, customers must license Viva, paying $45,000 per year for a one-person license.
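The arithmetic hidden in that quote is worth spelling out. The sketch below uses only the figures Forbes gives (2003 prices, claimed performance); the label "top model" and the helper name `summarize` are mine, since the quote does not name the $700,000 machine.

```python
# Figures taken from the Forbes quote above (2003 prices, vendor-claimed GFLOPS).
# "top model" is a placeholder label; the quote names only the HC-62.
MODELS = {
    "HC-62": {"price_usd": 350_000, "fpgas": 11, "gflops": 200},
    "top model": {"price_usd": 700_000, "fpgas": 22, "gflops": 400},
}
FPGA_PRICE_USD = 3_000  # "about $3,000 each" per the quote


def summarize(model: dict) -> dict:
    """Derive chip cost, chip share of the price, and price/performance ratios."""
    chip_cost = model["fpgas"] * FPGA_PRICE_USD
    return {
        "chip_cost_usd": chip_cost,
        "chip_share_of_price": chip_cost / model["price_usd"],
        "usd_per_gflops": model["price_usd"] / model["gflops"],
        "gflops_per_fpga": model["gflops"] / model["fpgas"],
    }


for name, model in MODELS.items():
    s = summarize(model)
    print(
        f"{name}: chips ${s['chip_cost_usd']:,} "
        f"({s['chip_share_of_price']:.1%} of price), "
        f"${s['usd_per_gflops']:,.0f} per GFLOPS, "
        f"{s['gflops_per_fpga']:.1f} GFLOPS per FPGA"
    )
```

By these numbers the FPGA chips account for less than a tenth of the machine's price, and both models come out at the same price per GFLOPS. The rest, presumably, paid for the integration and the tooling.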

In April 2011 the technology was acquired by Data I/O for $2+1 million, rebranded as Azido, only to be written off a few years later:

> We evaluated changes in Azido projects and projected cash flows which decreased or eliminated our expected future cash flows related to Azido technology’s use or disposition. Based on these evaluations, impairment charges of $31,000 and $2.3 million were taken against this software technology for the years ending December 31, 2013 and 2012, respectively. As of December 31, 2013, the Azido technology net carrying value is $0.

Later, the technology resurfaced at Haddington Dynamics, where it was used to design the custom hardware for the robotic hand.

Over the years, Haddington Dynamics has kindly shared everything related to the robotic hand, including the Viva binary and the FPGA sources (in the Viva language).

Viva is a visual development environment that allows top-to-bottom design while leveraging a large core library of pre-designed building blocks.

Viva isn't specifically tailored to building microprocessors and CPUs. It rather aims at building hardware pipelines (specialized accelerators).

Azido/Viva is not the easiest way to design hardware. Here are a few limiting factors:

- there is no community;

- the only publicly available body of knowledge is a user guide, a few sparse videos, and a rich core library;

- the software is available as an ancient Windows executable (from the Borland C++ Builder era);

- there are partial sources, but not enough to rebuild the binary;

- there probably is a reason why it isn't widely known today.

However, learning Viva/Azido is a unique path that differs from the mainstream approaches. It appeals to me because it is like a puzzle that pretty much nobody else in the world is going to bother solving.

Besides, the learning path itself is more appealing than a standard Verilog tutorial: there already is an existing design for the Dexter HD hardware. One "just" needs to figure out how to parse it, recursively unroll, simulate, and synthesize.

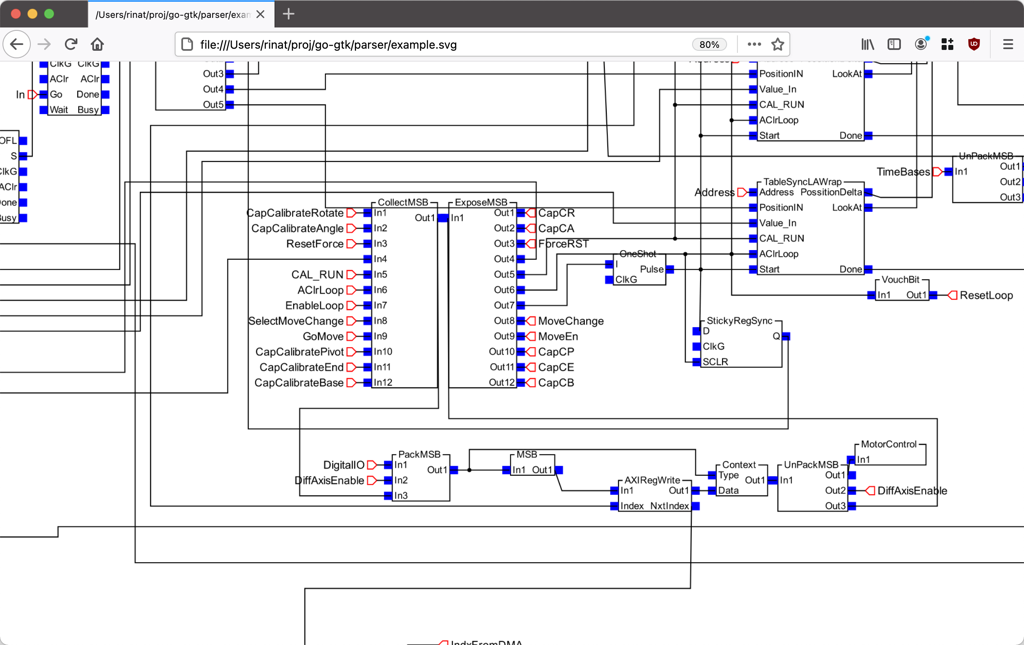

The image above shows a small piece (roughly 2% of the surface area) of the top-level design that drives the Dexter HD robotic hand.
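To make "parse and recursively unroll" a bit more concrete, here is a minimal sketch of the unrolling step on a toy block library. Everything in it is hypothetical: the `Block` class, the `unroll` function, and the adder library are illustration only and do not reflect the actual Viva file format or the viva-tools code. Wiring between blocks is left out entirely; the sketch only expands the hierarchy down to primitives.

```python
from dataclasses import dataclass, field


@dataclass
class Block:
    """A toy design block: a primitive if it has no children, otherwise a composite."""
    name: str
    # (instance_name, block_type) pairs placed inside this block
    children: list[tuple[str, str]] = field(default_factory=list)


def unroll(
    library: dict[str, Block],
    block_type: str,
    path: str = "top",
    _stack: tuple[str, ...] = (),
) -> list[tuple[str, str]]:
    """Return (hierarchical_path, primitive_type) for every primitive under block_type."""
    # Toy simplification: reject self-referential definitions instead of resolving them.
    if block_type in _stack:
        chain = " -> ".join(_stack + (block_type,))
        raise ValueError(f"self-referential definition: {chain}")
    block = library[block_type]
    if not block.children:
        return [(path, block_type)]  # reached a primitive
    flat: list[tuple[str, str]] = []
    for instance_name, child_type in block.children:
        flat.extend(
            unroll(library, child_type, f"{path}/{instance_name}", _stack + (block_type,))
        )
    return flat


# A made-up library: a full adder composed of two half adders and an OR gate.
LIBRARY = {
    "AND": Block("AND"),
    "OR": Block("OR"),
    "XOR": Block("XOR"),
    "HalfAdder": Block("HalfAdder", [("sum", "XOR"), ("carry", "AND")]),
    "FullAdder": Block("FullAdder", [("ha0", "HalfAdder"), ("ha1", "HalfAdder"), ("cout", "OR")]),
}

for instance_path, primitive in unroll(LIBRARY, "FullAdder"):
    print(instance_path, primitive)
```

The hierarchical path keeps each primitive traceable back to the place in the design it came from, which is handy once a flat list of thousands of gates needs debugging. A real unroller would also have to carry the connections across block boundaries, and that is where most of the work is.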

If you are interested in more details, I refer you to the viva-tools project on GitHub.

The purpose of that project is two-fold. First, to collect and archive artifacts related to the Viva/Azido platform before they are lost forever.

Second, I want to gain a deeper understanding of how software and hardware work together by rebuilding a piece of software that can be used to design, simulate, and synthesize hardware.

Current status: I'm able to parse the Dexter source files (and the core library) and render them with decent fidelity. The image of the Dexter design above was rendered by that tooling (Viva/Azido itself crashes while trying to open the design). There is a small test suite that verifies the correctness of the parsing.

You have read this far!

If you really dig what Haddington Dynamics is doing, especially with regard to the Viva/Azido stack, don't hesitate to reach out! As of 2022/2023 they have a ton of work to do and are actively hiring.

You need to be able to work legally in the USA and be interested in moving to Las Vegas.

Published: January 06, 2021.
